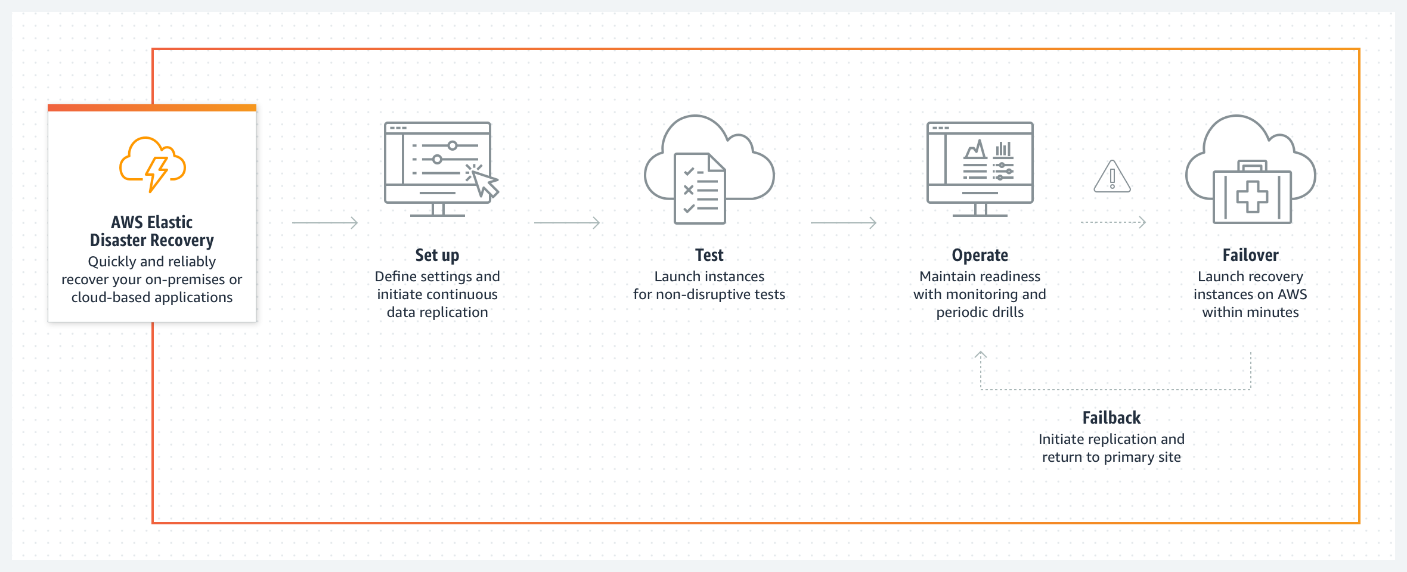

AWS Elastic Disaster Recovery

Scalable, cost-effective application recovery to AWS

Save costs by removing idle recovery site resources, and pay for your full disaster recovery site only when needed.

Recover your applications within minutes, at their most up-to-date state or from a previous point in time.

Use a unified process to test, recover, and fail back a wide range of applications, without specialized skill sets.

Automate actions such as configuring your environment, cleaning up drill resources, or activating monitoring tools on launched instances.

Use cases

Cloud to AWS

Help increase resilience and meet compliance requirements using AWS as your recovery site. AWS Elastic Disaster Recovery (AWS DRS) converts your cloud-based applications to run natively on AWS.

AWS Region to AWS Region

Increase application resilience and help meet availability goals for your AWS-based applications, using AWS DRS to recover applications in a different AWS Region.

How to get started

Get started with AWS Elastic Disaster Recovery

Start replicating your servers to AWS.

Learn more about pricing

Discover how to reduce costs with AWS as your recovery site.

Explore online training

Learn how to set up and operate AWS Elastic Disaster Recovery.